Quick piece of trivia for those who might find this useful.

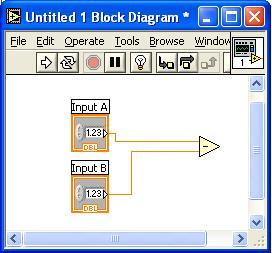

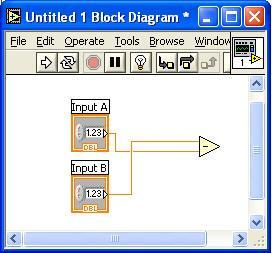

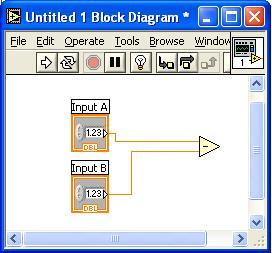

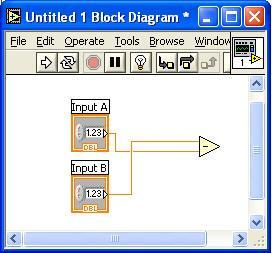

So you wired up a node, maybe even debugged it, and then realized: D'oh! I wired the inputs backwards (which matters most for something like a subtract primitive). There is a quick shortcut you can file away.

If you want to swap the wiring without deleting the wires and rewiring them, hold down the Control key (on Windows, or the equivalent key on other platforms) and click on the top or the bottom wire terminal on the subtract primitive. Voilà, the terminals get swapped.

Note: If you are not using the auto-tool, you must have the wiring tool selected when you do this.

I became a "professional programmer" when I was 17. "Professional" because a freeware app I made got put in a floppy-disk based periodical and I got paid money for it. How cool. (Holy cow, I found it: KeyCapsGS, first published in 1988, I believe.)

So since 1988, I've had to deal with the question of "when the heck is software done?" Well, metaphorically, software is never actually done. There's always 2.0, 3.0. But when you're working on 1.0, when do you decide to ship it?

As with all art forms, it depends on who's looking at it. I hope the software that controls the brakes of my car has zero bugs in it. Then again, all I really want is that there are no bugs that ever prevent me from stopping. So even safety critical software has some sort of acceptable bug threshold. If my screensaver applet crashes when I change modes, I may not even care.

So my answer is: you ship it when the quality level is acceptable to your customers (and possibly to regulatory bodies that have some sort of say in the matter).

The concept of an "acceptable level of bugs" doesn't sit well with some people. But again, it's a matter of perspective. The article quoted a study that found

...two separate people dissected one particular Microsoft program and found out, to their shock, that it was over 2,000% larger than it should have been.

Maybe Microsoft programs are indeed "bloated". But so what? Probably 100 people in the world really care enough not to buy their software. But if Microsoft went and rearchitected some application so that it met those folks' mythical standards, they probably would lose tens of thousands of sales to satisfy those 100 people. So calling software "done" isn't just a technical decision, it's a business one.

So the key is to understand your customer base. Understand what they need. Understand what they want your software for. Is it being used in a safety critical system? Is it being used in a screensaver? Your biggest risk in software is misunderstanding the quality that the customer expects and giving them something different. If they expect more and you don't give it to them, they'll avoid it like the plague. If they expect less and you give them more, you may have wasted development time that a competitor used to snatch those customers away.

LabVIEW 7.1 has a great reputation and we're all quite proud of that release. But not all releases hit the "meet customer expectations" mark. I presided over a version of LabVIEW that had a bug "fix" in it that caused an even bigger bug than the one it fixed. Whoops. (Fortunately, we pulled the release and fixed it before anyone noticed.) But in the end, our goal is always to make you happy.

In my End of the Free Lunch post, I mentioned how desktop CPUs are moving toward a multiprocessor architecture that will require major changes in the way programmers write software in order to attain higher performance. Well, this isn't just a trend in the desktop market. The embedded systems market is also seeing a proliferation of multi-CPU devices. At first, they were exotic boards that used parallel arrays for signal processing. Then, you saw hybrid systems with two chips (sometimes in the same package), commonly used in cell phone applications. Now you are seeing rather standard architectures with dual cores, like a recently announced PowerPC chip for military, telecom, and other embedded markets.

What does this mean for embedded systems? Well, opinions seem to vary. Enea encourages asymmetric multiprocessing (i.e., an OS running on each chip and different programs running on different chips) in their article "Who's afraid of asymmetric multiprocessing":

In addition, SMP is not well suited for many applications that require predictable, real-time response. It does not scale well to very large systems, and it lacks fault-tolerant features.

Firing back in the same magazine, QNX says:

By running only one copy of the OS, SMP can dynamically allocate resources to specific applications rather than to CPU cores, thereby enabling greater utilization of available hardware. It also lets system tracing tools gather operating statistics and application interactions for the multi-core chip as a whole, giving developers valuable insight into how to optimize and debug applications.

So whom do you believe? Well, it probably depends upon your application and your viewpoint on the world. Speaking as a developer on a huge code base, I'm firmly in the SMP camp. Why? Well, first, LabVIEW already runs well on an SMP system. We put in a lot of work to make our application nicely multithreaded and I want our customers to be able to take advantage of it. Second, moving an application from a multithreaded environment to an asymmetric multiprocessing (AMP) system requires a change in architecture. Changes in architecture are hard...

Really hard. So I'm not "afraid" of AMP, but I'm certainly "annoyed" that I'd have to contemplate it.
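To give a rough feel for why that change is architectural rather than cosmetic, here is a minimal Python sketch (not LabVIEW code, and not how LabVIEW is implemented). The function names `smp_style_sum` and `amp_style_sum` are made up for illustration. The first version lets threads update one shared total under a lock, which is the shape a multithreaded program on SMP tends to take. The second lets each "node" own its data and talk only through explicit messages, which is closer to what AMP demands. Threads stand in for the separate cores here so the sketch stays self-contained; on real AMP hardware each node would be running under its own OS image.

```python
import queue
import threading


def smp_style_sum(values, workers=2):
    """Shared-memory style: threads add into one shared total under a lock."""
    total = 0
    lock = threading.Lock()

    def work(chunk):
        nonlocal total
        partial = sum(chunk)
        with lock:  # shared state must be synchronized
            total += partial

    chunks = [values[i::workers] for i in range(workers)]
    threads = [threading.Thread(target=work, args=(c,)) for c in chunks]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return total


def amp_style_sum(values, nodes=2):
    """Message-passing style: nodes share nothing except explicit queues.

    Assumption: threads simulate the separate cores of an AMP system.
    """
    results = queue.Queue()

    def node(inbox):
        chunk = inbox.get()      # work arrives as an explicit message
        results.put(sum(chunk))  # the partial result goes back as a message

    inboxes = [queue.Queue() for _ in range(nodes)]
    threads = [threading.Thread(target=node, args=(q,)) for q in inboxes]
    for t in threads:
        t.start()
    for i, q in enumerate(inboxes):
        q.put(values[i::nodes])  # data is partitioned and shipped up front
    for t in threads:
        t.join()
    return sum(results.get() for _ in range(nodes))
```

Both functions compute the same answer, but the second one had to decide up front how to partition the data and what messages to exchange. Retrofitting those decisions onto a large existing code base is the part that hurts.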

Yes, SMP will never work across two different types of processors. That's what we've got LabVIEW 8 with Distributed Intelligence for...